I (Not) Robot

First of all, neither this paragraph nor any subsequent paragraph has been generated by ChatGPT, the artificial intelligence tool that can mimic human speech and write well enough that the Guardian believes “[p]rofessors, programmers and journalists could all be out of a job in just a few years.” That particular bit has been done to death.

Here at home, Saltwire educational commentator Grant Frost is far less hysterical than the Guardian, although he does confess to finding the technology “unsettling.” His bottom line is that it’s here, it’s a “game changer” and education will have to adapt to it. He’s even open to it becoming an educational tool.

I heard another commentator come to a similar, yet decidedly more interesting, conclusion about ChatGPT this week. I was listening to Douglas Rushkoff’s Team Human podcast (I’ve cited Rushkoff before; he’s the author, documentarian and educator who explained the problem with venture capital for me), and his thoughts about ChatGPT were so refreshingly different they stopped me in my tracks.

Rushkoff explained that in the eight years he’s been teaching at a public college he’s “occasionally” encountered papers that have been “cut and pasted” from essays and articles or Wikipedia, but these were “relatively easy to spot,” requiring just a web search for a particularly suspicious sentence.

He also acknowledged that some students purchase their papers, and made the cheeky suggestion that for now, while it’s free, ChatGPT “kind of levels the playing field” by giving students who don’t have the money to “pay for a bespoke paper from an anonymous grad student gig worker” the opportunity to “produce and submit essays they haven’t written.”

He said he’d recently encountered his first AI-generated paper and that, while the sentences were clearer and the organization better than those of the papers actually “written” by his students (which, he said, are usually created with voice-to-text apps on iPhones and never proofread):

…to an experienced essay reader, they all exhibit these telltale signs of synthetic production—the depth of analysis, it remains exactly constant, there’s no ‘Aha!’ moments, no incomplete thoughts, no wrestling with ambiguity. It all reads like Wikipedia—no doubt where much of the thinking by the AI has been derived, at least, indirectly.

But he acknowledged that the technology is likely to improve and said that if “as accrediting institutions we need to enforce basic academic integrity,” then there are “a few good alternatives” for doing so.

Color-coded

The first is technological and involves programs like Gltr, a free, online tool that provides “a forensic analysis of how likely an automatic system has generated a text.”

Gltr’s creators:

…make the assumption that computer generated text fools humans by sticking to the most likely words at each position…In contrast, natural writing actually more frequently selects unpredictable words that make sense to the domain. That means that we can detect whether a text actually looks too likely to be from a human writer!

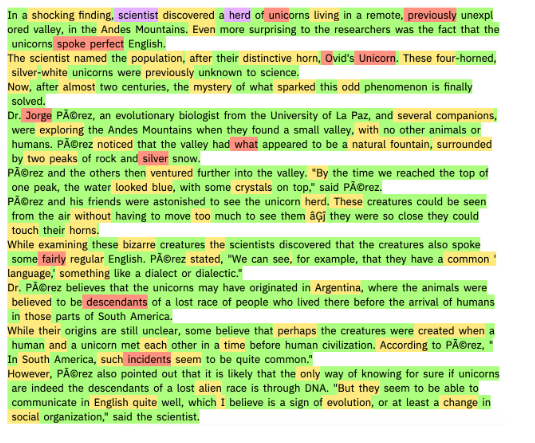

You paste your text into Gltr, which analyzes it according to “how likely each word would be the predicted word given the context…If the actual used word would be in the Top 10 predicted words the background is colored green, for Top 100 in yellow, Top 1000 red, otherwise violet.”
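To make that coloring rule concrete, here is a minimal Python sketch of the bucketing step only. It assumes the hard part, asking a language model (GPT-2, in Gltr’s case) how highly it ranked each word that was actually used, has already been done, so it simply takes a list of those ranks as input. The function names and the sample ranks are hypothetical illustrations, not Gltr’s actual code or output.

```python
from collections import Counter

# Thresholds as Gltr's creators describe them: Top 10 -> green,
# Top 100 -> yellow, Top 1000 -> red, anything else -> violet.
# Assumption: ranks are 1-based, so rank 1 is the model's single likeliest word.
BUCKETS = [(10, "green"), (100, "yellow"), (1000, "red")]
COLORS = ("green", "yellow", "red", "violet")


def color_for_rank(rank: int) -> str:
    """Map one word's predicted rank to a Gltr-style highlight color."""
    if rank < 1:
        raise ValueError("rank must be 1 or greater")
    for limit, color in BUCKETS:
        if rank <= limit:
            return color
    return "violet"


def color_summary(ranks: list[int]) -> dict[str, float]:
    """Return the share of words that land in each color bucket."""
    if not ranks:
        return {color: 0.0 for color in COLORS}
    counts = Counter(color_for_rank(r) for r in ranks)
    return {color: counts[color] / len(ranks) for color in COLORS}


# Hypothetical ranks for an eight-word passage (made up for illustration).
print(color_summary([1, 3, 2, 15, 1, 250, 4, 5000]))
```

In Gltr’s terms, a passage that comes back overwhelmingly green and yellow is the suspicious one, while human writing should show more red and violet.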

I pasted the first paragraph of my own Fast & Curious item about venture capital into it (yes, I realize, this is also a bit) and it looks like this:

The violet for “Innov” and “Nova” doesn’t really count; they’re “named entities,” which I think makes them naturally less predictable. But the other violet does count, as does the healthy sprinkling of red. By way of comparison, the Gltr creators ran some computer-generated text through their system and, aside from the “prompt” (the first line of the text, the one fed into the computer by a human), the rest was far less colorful:

The result:

We can see that there is not a single purple word and only a few red words throughout the text. Most words are green or yellow, which is a strong indicator that this is a generated text.

The authors do add a disclaimer, though, one that echoes Rushkoff’s conviction that the language tech is likely to improve:

This version of GLTR was made in 2019 to test against GPT-2 text. It might not be helpful to detect texts for recent models (ChatGPT).

Human engagement

Rushkoff isn’t completely sold, anyway, on this alternative, which he likens to “a technological arms race against cheating students.”

The problem of “students submitting fraudulently produced papers,” he said, points to “a more fundamental issue with how we do education.” Europeans, he argued, have a much different approach:

For them, the essay submitted by a student is not the culmination of a semester’s work, but the starting place for a conversation.

I’m going to quote his conclusion at length because I don’t think it would benefit from me paraphrasing it:

I understand why we might want to give competency exams to paramedics and cab drivers before entrusting them with our lives, but a liberal arts education? It’s not a license to practice, it is an invitation to engage with ideas, with culture and society. That’s a hard culture to engender with 50 or more students in a seminar, or several hundred in a lecture, particularly when many colleges can no longer afford teaching assistants or graduate students to help read papers. It’s even harder when students are showing up more for the credit than for the learning. But the only truly workable response to a student population that has turned to AI to produce its papers is to retrieve the time-consuming, face-to-face interaction that for me, anyway, constituted the most memorable moments of my education: yes, I’m talking about live conversations with students about ideas, their perspectives on what they’ve read, or even their responses to my questions about their work.

If we want students to “bring their human selves to the table,” he said, “we have to create an environment that engenders human engagement.”

This is a common (and I think comforting) refrain among all the tech skeptics I listen to these days, that problems caused by tech can be solved by being more, not less, human.

Solidarity

I didn’t plan it this way, but that item actually allows me to segue far more smoothly than is my wont into this next one, which is the strike by Cape Breton University faculty, seen here picketing Premier Tim Houston’s “State of the Province Address” (co-hosted by CBU) at the Port of Sydney this morning:

Source: Twitter

More precisely, I want to discuss a speech Professor Rod Nicholls, a member of the university’s bargaining committee, gave to CBU students yesterday.

After telling the audience the pay increase CBU was offering faculty would make them the second-highest paid in Nova Scotia and that it would be unreasonable for them to want more than other educators made, Nicholls said he himself made $150,000 a year:

I work in a department where I earn three or four times the salary of hard working, dedicated, well-educated staff. Is that fair? Not really. Why does it happen? Because, why does a soccer player and a singer earn so much money relative to bus drivers and nurses? That’s just the way of the world, you can’t get around that.

I don’t understand how he can believe both these things at the same time—equality matters when you’re comparing salaries at one university to salaries at another university but not when you’re comparing salaries within a single university?

But what really irked me was his message to students, which was that our capitalist system (which is what he’s talking about) with its inherent inequalities is the natural state of things and cannot be questioned, let alone changed. It’s a speech I can imagine a 9th-century feudal lord giving to a vassal: “I live in a castle and you live in a hovel because that’s just the way of the world, you can’t get around that.”

How do you negotiate with someone who comes to the table convinced the world is naturally unfair and there’s nothing to be done about it?

You can watch the entire presentation to CBU students on the Caper Times Instagram account. Nicholls comes on at about the 30-minute mark.
