Wen Mr Clevver wuz Big Man…they had evere thing clevver. — Russell Hoban, Riddley Walker

Last month, I explored the potential political impact of a newly-commissioned study by the American National Academies of Sciences on the environmental effects of nuclear war. This month, I turn to a recently-concluded study into a subject – the weaponization of artificial intelligence – with the potential to increase the chances of such an apocalypse.

The National Security Commission on Artificial Intelligence (NSCAI) – which submitted its final report to President Biden on March 1 – was established by Congress as part of the $716 billion 2019 National Defense Authorization Act, named after the late Republican Senator John McCain. McCain was in some ways – most notably, in his endurance and denunciation of torture – a brave and principled figure.

But he was also a fanatic advocate of American military supremacy – and adventurism – and would have doubtless approved of a study “to consider the methods and means necessary to advance the development of artificial intelligence, machine learning, and associated technologies by the United States to comprehensively address national security and defense needs.” To consider, that is, not whether we should go down such a path, but what we ‘need’ to blaze a trail to the artificially intelligent, autonomously orchestrated, algorithmically programmed ‘battlefield of the future.’

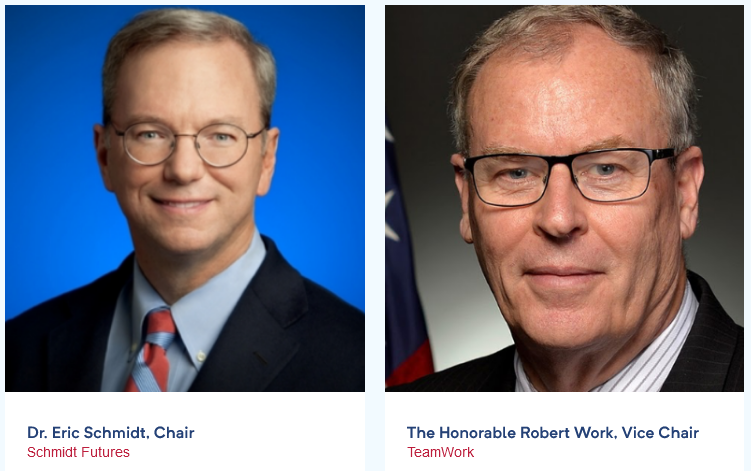

To safely reach these predetermined conclusions – to steer, to be frank, this rigged ship to shore – a trusty crew of 15 was recruited, largely from the elite ranks of US tech giants with much to gain (killings to make) from such a brave new world of war. The Commission was chaired by Eric Schmidt, Google’s chief executive from 2001-2011, now president of Schmidt Futures (‘We Assemble People’); the vice chair was Robert Work, deputy secretary of defense under Presidents Obama and Trump, and now president of TeamWork, a consultancy specializing in “military-technical competitions,” “revolutions in war,” and “the future of war.” A mere 11 of the other 13 commissioners have multiple, interwoven ties to a somewhat incestuous family of mega-corporations including Oracle, The Walt Disney Corporation, In-Q-Tel, Intel, Hewlett-Packard, Nortel Networks, Microsoft, Amazon, Lockheed Martin, Raytheon, Bell, IBM and (again) Google.

Only two commissioners, Steve Chien and Mignon Clyburn, do not hail from the land of capitalist Big Tech and Data, though Dr. Chien’s work for NASA and the Artificial Intelligence Group at the Jet Propulsion Laboratory, California Institute of Technology, is doubtless dependent in part on the technology (and perhaps funding) of these cyber-Colossi. Clyburn is a public servant long-dedicated to “closing persistent digital and opportunities divides that continue to challenge rural, Native, and low wealth communities;” a truly vital mission, though I suspect not one featuring prominently in the Commission’s core, Pentagon-focused inquiry.

A closer look at two Commissioners will confirm more clearly the entrenched nexus of interests and outlook – wealth and worldview – motivating the ‘independent’ panel. In addition to serving as an advisor to one of the world’s biggest arms companies, Raytheon, Katharina McFarland has spent much of her career buying weapons for the Pentagon, serving as assistant secretary of defense for acquisition, and assistant secretary of the army for acquisition, logistics & technology, under Presidents Obama and Trump, as well as director of acquisition at the Missile Defense Agency – notorious for flawed testing and chronic techno-hype – under Presidents Bush (II) and Obama. But how can one question the impartiality of a recipient of the US Navy’s apparently prestigious Civilian Tester of the Year Award, and a past-president of the Defense Acquisition University (a “corporate university” established by the Pentagon to “develop” the vast cadres of “acquisition, requirements and contingency professionals” needed to “deliver and sustain effective and affordable warfighting capabilities”)?

Dr. Andrew W. Moore is head of the Google Cloud Artificial Intelligence Division. His “research interests encompass the field of ‘big data’,” the application of “statistical methods and mathematical formulas to massive quantities of information, ranging from web searches to astronomy to medical records, in order to identify patterns and extract meaning.” In addition – and precisely by honing these algorithmic methods – Moore has spent years “improving the ability of robots and other automated systems to sense the world around them and respond appropriately.”

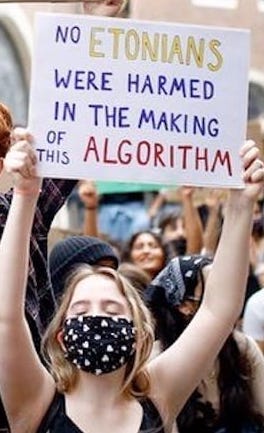

Moore’s work takes us to the dark, killer-robot heart of the Autowars project, for the Almighty Algorithm is, on closer moral inspection, both a false and flawed Idol, a purported triumph of objective rationality (sub-)routinely masking subjective presumption and prejudice. This mathematization of privilege – the projection of power as ‘science’ – is already widely used, for example, in the ‘predictive policing’ of racialized minorities, and was on grim display in Britain last year when an algorithm for adjusting exam results during the COVID-19 lockdown ‘found’ that the better-off you were, the better you did, while the poorer your background (and school), the poorer you ‘really’ performed. As a popular protest sign put it:

No Etonian was damaged in the making of this algorithm.

In an outstanding recent essay, Autonomous Weapons and Patriarchy, Canadian scholar-activist Ray Acheson:

…situates the development of autonomous weapon systems in the broader context of the control of human lives globally through the rise of the digital and physical ‘panopticon’ – a system of surveillance, control, incarceration, and execution that asserts the dominance of the political and economic elite over the rest of the world.

For this reason, “the operation of weapons programmed to target and kill” will inevitably be “based on pre-programmed algorithms” aimed at “people who are racialized, gendered, and otherwise categorized.” It is thus, she argues, important to confront autonomous weapons:

…not just as material technologies that need to be prohibited, but as manifestations of the broader policies and structures of violence that perpetuate an increasing abstraction of violence and devaluation of human life.

The Commission’s findings and recommendations perfectly reflect this devaluation, graphically (and there are lots of graphs) illustrating the confluence of military-industrial hunger for profit and the high technological fantasy that the real world can be ‘sensed’ by machines sometimes capable of acting more ‘intelligently’ – and, in the heat of virtual battle, quickly – than people.

Schmidt and Work are keen to stress that, most of the time, AI is benignly – panopticon? What panopticon? – “improving life and unlocking mysteries in the natural world.” Their only “fear” is that it may also “be used in the pursuit of power,” creating “weapons of first resort in future conflicts.” For which reason, America must maintain its own grip on power by preparing to conquer – by force, if need be – the new, artificial frontier:

Ultimately, we have a duty to convince the leaders in the US government to make the hard decision and the down payment to win the AI era.

“We envision,” they prophesy, “hundreds of billions in federal spending in the coming years,” and sternly caution: “This is not a time for abstract criticism of industrial policy or fears of deficit spending to stand in the way of progress.” Nor, the Commission stresses, is it time for regulation, or God forbid disarmament, to frustrate such high hopes. No, a “new warfighting paradigm is emerging” – destined to “pit algorithms against algorithms” – in which “advantage will be determined by the amount and quality of a military’s data, the algorithms it develops, the AI-networks it connects, the AI-enabled weapons it fields, and the AI-enabled operating concepts it enables to create new ways of war.” And because of these future ‘facts,’ it stands to reason that:

A global treaty prohibiting the development, deployment, or use of AI-enabled and autonomous weapon systems is not currently in the interest of the US or international security and would not be advisable to pursue for several reasons.

The chief objection is then candidly stated: any such treaty would commit the felony of “overly constraining” existing and potential “US military capabilities” – as indeed, one would hope it would, together with the capabilities of all states parties. The Commission also cites, as opponents of arms control always do, a number of concerns (over definition, scope, and particularly verification) that would indeed need to be addressed in the course of negotiations, but can hardly be allayed without them.

Advocates of a ban on fully-autonomous weapons – including 30 states and UN Secretary-General António Guterres – reacted to the NSCAI study with horror. “This is,” argued Professor Noel Sharkey of the Campaign to Stop Killer Robots, “a shocking and frightening report that could lead to the proliferation of AI weapons making decisions about who to kill.”

“The most senior AI scientists on the planet,” he continued, “have warned them” – meaning not just the US but all nations wading into the Rubicon – “about the consequences, and yet they continue. This will lead to grave violations of international law.” The Commission’s executive director, Yll Bajraktari, claims at the beginning of the report that: “We met with anyone who thinks about AI, works with AI, and develops AI, who was willing to make time with us.” I strongly suspect Professor Sharkey – or Ray Acheson – would have been willing to ‘make time’: why wasn’t he, she, or the other scholars he mentions, invited?

On November 11, Armistice Day, 2020, the Campaign to Stop Killer Robots received the Ypres Peace Prize, allotted by Ypres City Council to individuals and groups striving to prevent future conflagrations on a par with or exceeding World War One. In the view of the campaign, the prize is “a clear example…that public concerns over killer robots are growing and demands for recognition must be realized”: “The Campaign urges Belgium and other nations to launch negotiations to ban fully autonomous weapons and retain meaningful human control over the use of force.”

Even the Commission agrees that such ‘meaningful control’ should be retained in one case: “The United States,” it argues, should – while seeking “similar commitments from Russia and China” – “make a clear, public statement that decisions to authorize nuclear weapons employment must only be made by humans, not by an AI-enabled or autonomous system.” A chronic triple flaw, however, fatally weakens this seemingly strong position.

First, it sidesteps the extent to which (mis)information from AI and autonomous systems may influence or even dictate human decision-making in a crisis, or may even falsely generate such a crisis.

Second, it downplays the degree to which the limitations of existing technology (risk of malfunction; susceptibility to hacking) already dehumanize decision-making, particularly when so many nuclear weapons are deployed in a state of alert, ready to launch-on-warning. True, human judgement has so far – just – prevented false alarms from starting actual wars, and it would obviously be insane to remove what’s left of the human factor from the equation. But what kind of ‘safeguard’ is it to place the fate of the Earth in the hands of one – quite possibly, mentally unstable – leader, or even a military commander, panicking on a battlefield or stranded, incommunicado, in a submarine?

And third, the Commission seems blind to the danger that an AI-enabled conventional offensive – almost certain to feature a cyber-blitzkrieg against military and civilian infrastructure – could be confused with the beginning of a nuclear attack, interpreted as its prelude, or deemed sufficiently devastating to warrant a nuclear response. The 2018 US Nuclear Posture Review countenances just such retaliation to four types of non-nuclear attack (or threat): biological, chemical, conventional – and cyber. And while the initial response may involve the ‘tactical’ use of so-called ‘low-yield’ weapons – up to 5 kilotons, a third of the Hiroshima Bomb! – the risks of thermonuclear escalation are (or should be) obvious. (For a video presentation of how such a chain-reaction might occur, see the International Campaign to Abolish Nuclear Weapons (ICAN).)

Similarly radioactive ‘logic’ is on display in the British government’s new ‘Integrated Review of Security, Defence, Development and Foreign Policy,’ entitled – in swaggering Brexiteer fashion – Global Britain in a Competitive Age. Having announced without explanation a steep increase in its stockpile of nuclear warheads – raising the cap from 180 to 260, perhaps to add some ‘low-yield’ options? – the review reiterates Britain’s long-standing vow never to use nuclear weapons against non-nuclear-weapon states. And then adds a chilling “However”:

…we reserve the right to review this assurance if the future threat of weapons of mass destruction, such as chemical and biological capabilities, or emerging technologies that could have a comparable impact, makes it necessary.

Autonomous AI (a prospect fervently embraced in the review) is almost certainly one of these ‘emerging technologies,’ meaning that, under this new, deliberately ill-defined doctrine, the use against Britain of the very weaponry it covets will make ‘necessary’ the nuclear destruction of a potentially non-nuclear state. (How comforting, though, that that decision will still be taken by a human being; and perhaps an Old Etonian, at that!)

How did any of this become remotely thinkable? In 1988, nearly a third of a century ago, Soviet President Mikhail Gorbachev warned the United Nations that the continued “militarization of human thought” was no longer compatible with organized, civilized life on Earth. “The very idea,” he declared, “of the nature and criteria of progress is changing. To assume that the problems tormenting humankind can be solved by the means and methods that were used or that seemed to be suitable in the past is naïve.” For this reason, it was “evident” that “force or the threat of force,” nuclear or conventional, “neither can nor should be instruments of foreign policy”: that “disarmament” was now “the most important thing of all, without which no other issue of the forthcoming age can be solved.”

Millions of people looked at the same evidence and reached the same conclusion. They didn’t need an algorithm to tell them: if the modern world has a future, modern warfare doesn’t.

CODA

The article opens with a quote from Russell Hoban’s remarkable 1980 novel Riddley Walker, written in the stunted language of a post-apocalyptic ‘Inland’ (England), laid waste centuries before by “a flash of lite…bigger nor the woal worl [whole world]” which “ternt the nite to day”:

Then every thing gone black. Nothing only nite for years on end. Playgs [plagues] kilt people off nor there wernt nothing growin in the groun. Man and woman starveling in the blackness looking for the dog to eat it and the dog out looking to eat them the same.

The calamity came courtesy, so the myth ran, of ‘Mr Clevver,’ the ‘Big Man’ who for so long had counted on one thing above all – counting; calculating – for his success:

Cudnt stop ther counting which wer clevverness and making mor the same. … They had machines et [ate] numbers up. They fed them numbers and they fractiont out the Power of things.

As his name suggests, ‘Mr Clevver’ proved a little too clever for his own – or anyone else’s – good. His intelligence was, it’s true, incredible.

It just wasn’t real.

Sean Howard is adjunct professor of political science at Cape Breton University and member of Peace Quest Cape Breton.